Regularizing Action Policies for Smooth Control with Reinforcement Learning

Abstract

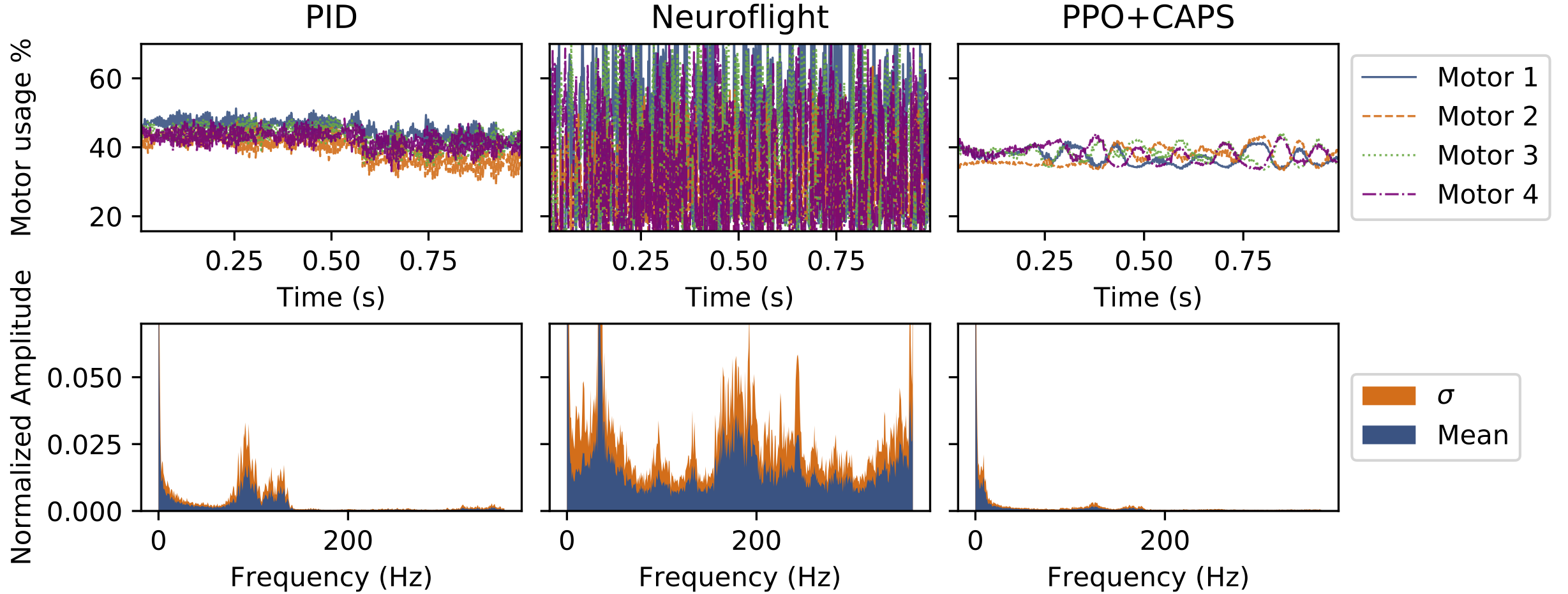

A critical problem with the practical utility of controllers trained with deep Reinforcement Learning (RL) is the notable lack of smoothness in the actions learned by the RL policies. This trend often presents itself in the form of control signal oscillation and can result in poor control, high power consumption, and undue system wear. We introduce Conditioning for Action Policy Smoothness (CAPS), a simple but effective regularization on action policies, which offers consistent improvement in the smoothness of the learned state-to-action mappings of neural network controllers, reflected in the elimination of high-frequency components in the control signal. Tested on a real system, improvements in controller smoothness on a quadrotor drone resulted in an almost 80% reduction in power consumption while consistently training flight-worthy controllers.

This work has been accepted for publication and is set to appear at the International Conference on Robotics and Automation (ICRA) 2021.

Overview

One of the key issues with using RL for control is that it can learn very noisy control policies. This is because RL algorithms do not typically optimize policies for smoothness: the Markov property in RL assumes complete independence of state-action pairs. This assumption does not hold well in real applications, however, where it is beneficial to have some cohesion in how different states are mapped to actions. We introduce CAPS as a tool for regularizing policy optimization to promote action smoothness. CAPS can dramatically improve action policy smoothness, as shown in the figure below.

Method

To enable smoother control, we add two smoothness losses to the RL policy optimization criteria:

- Temporal Smoothness, to ensure that subsequent actions are similar to current actions: $$L_T = D(\pi(s_t), \pi(s_{t+1}))$$

- Spatial Smoothness, which ensures that similar states map to similar actions: $$L_S = D(\pi(s), \pi(\bar{s}))$$ where $\bar{s} \sim \phi(s)$ is drawn from a distribution around $s$
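The two losses above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the released implementation: it takes $D$ to be the Euclidean distance and $\phi(s)$ to be an isotropic Gaussian centered on $s$, and it combines the terms with hypothetical weights `lambda_t` and `lambda_s`. The function names and the two toy policies are likewise invented for the example.

```python
import math
import random


def distance(a, b):
    """Euclidean distance between two action vectors (an assumed choice of D)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def temporal_smoothness(policy, s_t, s_next):
    """L_T = D(pi(s_t), pi(s_{t+1})): penalize change between consecutive actions."""
    return distance(policy(s_t), policy(s_next))


def spatial_smoothness(policy, s, sigma, rng):
    """L_S = D(pi(s), pi(s_bar)), with s_bar sampled from a Gaussian around s.

    The Gaussian with standard deviation `sigma` is an assumed choice of phi.
    """
    s_bar = [x + rng.gauss(0.0, sigma) for x in s]
    return distance(policy(s), policy(s_bar))


def regularized_loss(policy_loss, l_t, l_s, lambda_t, lambda_s):
    """Add the weighted smoothness penalties to a loss that is being minimized."""
    return policy_loss + lambda_t * l_t + lambda_s * l_s


# Two toy one-dimensional policies (hypothetical, for illustration only).
def smooth_policy(s):
    return [0.5 * s[0]]


def binary_policy(s):
    return [1.0 if s[0] >= 0 else -1.0]


rng = random.Random(0)
s_t, s_next = [-0.01], [0.01]  # two nearly identical consecutive states

lt_smooth = temporal_smoothness(smooth_policy, s_t, s_next)
lt_binary = temporal_smoothness(binary_policy, s_t, s_next)
ls_smooth = spatial_smoothness(smooth_policy, [0.0], 0.05, rng)

print(lt_smooth, lt_binary, ls_smooth)
```

For two nearly identical consecutive states, the binary policy flips sign and incurs a far larger temporal penalty than the linear one, which is the kind of behavior the regularization discourages. In an actual training loop these terms would be computed on batches of network outputs inside an autodiff framework so that gradients flow back into the policy parameters.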

Results

We tested CAPS on a simple toy problem (see Supplementary for details) in which the ideal response (green) is known but can also be approximated, though less optimally, by a binary response (red). CAPS agents behave closer to the ideal response, whereas vanilla policies are closer to the binary response, which likely contributes to vanilla policies being noisier.

We also tested our method on OpenAI Gym benchmarks, where CAPS produced smoother responses on all tested environments. The benefits of CAPS are also visually noticeable in some tasks, as shown by the example of the pendulum task below. Additional results are provided in our paper and in the supplementary material.

To test real-world performance, we also evaluated CAPS on a high-performance quadrotor drone, whose schematics and training were based on Neuroflight. CAPS agents could be trained with simpler rewards and still achieved comparable tracking performance, while offering over 80% reduction in power consumption and over 90% improvement in smoothness (more details in our paper).

We compare the FFTs and motor usage of CAPS against those of Neuroflight and a tuned PID controller. Note the significant improvement in control smoothness, which is also reflected in the FFT.
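To make the link between the frequency spectrum and smoothness concrete, the sketch below computes the amplitude spectrum of a control signal with a naive discrete Fourier transform and summarizes it as a frequency-weighted mean amplitude. This score is one plausible way to quantify high-frequency content and is an assumption of this example, not necessarily the metric reported in the paper. The function names, the sample rate, and the synthetic signals are all hypothetical.

```python
import cmath
import math


def amplitude_spectrum(signal, sample_rate):
    """Return (frequencies, amplitudes) for the positive-frequency DFT bins.

    The DC and Nyquist bins are skipped so that the 2/n scaling gives the
    amplitude of each sinusoidal component.
    """
    n = len(signal)
    freqs, amps = [], []
    for k in range(1, (n - 1) // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(signal)
        )
        freqs.append(k * sample_rate / n)
        amps.append(2.0 * abs(coeff) / n)
    return freqs, amps


def frequency_weighted_amplitude(signal, sample_rate):
    """Mean of amplitude times frequency; higher means more high-frequency content."""
    freqs, amps = amplitude_spectrum(signal, sample_rate)
    return sum(f * a for f, a in zip(freqs, amps)) / len(freqs)


sample_rate = 100.0  # Hz, hypothetical
n = 100
t = [i / sample_rate for i in range(n)]

# A slow 1 Hz command, and the same command with a 20 Hz oscillation on top.
smooth_signal = [math.sin(2 * math.pi * 1.0 * ti) for ti in t]
noisy_signal = [
    math.sin(2 * math.pi * 1.0 * ti) + 0.5 * math.sin(2 * math.pi * 20.0 * ti)
    for ti in t
]

score_smooth = frequency_weighted_amplitude(smooth_signal, sample_rate)
score_noisy = frequency_weighted_amplitude(noisy_signal, sample_rate)
print(score_smooth, score_noisy)
```

The oscillating signal scores higher because its 20 Hz component is weighted by its frequency. For signals of realistic length, an FFT routine from a numerical library would replace the quadratic-time loop used here.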

Code

For the time being, a zipped copy of the code used for the benchmarks and toy problem is available via the source below. We will make the code available on GitHub soon, including the code for the drone training.

Reference

If you find this useful in your work please consider citing:

@inproceedings{caps2021,

title={Regularizing Action Policies for Smooth Control with Reinforcement Learning},

author={Siddharth Mysore and Bassel Mabsout and Renato Mancuso and Kate Saenko},

booktitle={IEEE International Conference on Robotics and Automation},

year={2021},

}