Visual Domain Adaptation Challenge

(VisDA-2021)

[Overview] [Data] [Prizes] [Evaluation] [Rules] [FAQ] [Organizers] [Sponsors]Workshop

Click [here] to learn about our VisDA 2022 Challenge.

Please join our [mailing list] for official announcements!

We are happy to announce that the live breakout session of the VisDA-2021 NeurIPS workshop took place on Tuesday, December 7th 2021 at 20:00 GMT. Presentations, recordings, and techincal reports can be found below. Leaderboard| # | Team Name | Affiliation | ACC / AUROC |

|---|---|---|---|

| 1 | babychick [video] [slides] [pdf] | Shirley Robotics Burhan Ul Tayyab, Nicholas Chua | 56.29 / 69.79 |

| 2 | chamorajg [video] [slides] [pdf] | - Chandramouli Rajagopalan | 48.49 / 76.86 |

| 3 | liaohaojin [video] [pdf] | Beijing University of Posts and Telecommunications Haojin Liao, Xiaolin Song, Sicheng Zhao, Shanghang Zhang, Xiangyu Yue, Xingxu Yao, Yueming Zhang, Tengfei Xing, Pengfei Xu, Qiang Wang | 48.49 / 70.8 |

- "Not All Networks Are Born Equal for Robustness" by Cihang Xie [video]

- "Natural Corruption Robustness: Corruptions, Augmentations and Representations" by Saining Xie [video]

- "Challenges in Deep Learning: Applications to Real-world Ecological Datasets" by Zhongqi Miao [video]

Overview

Progress in machine learning is typically measured by training and testing a model on the same distribution of data, i.e., the same domain. However, in real-world applications, models often encounter out-of-distribution data, such as novel camera viewpoints, backgrounds or image quality. The Visual Domain Adaptation (VisDA) challenge tests computer vision models’ ability to generalize and adapt to novel target distributions by measuring accuracy on out-of-distribution data.

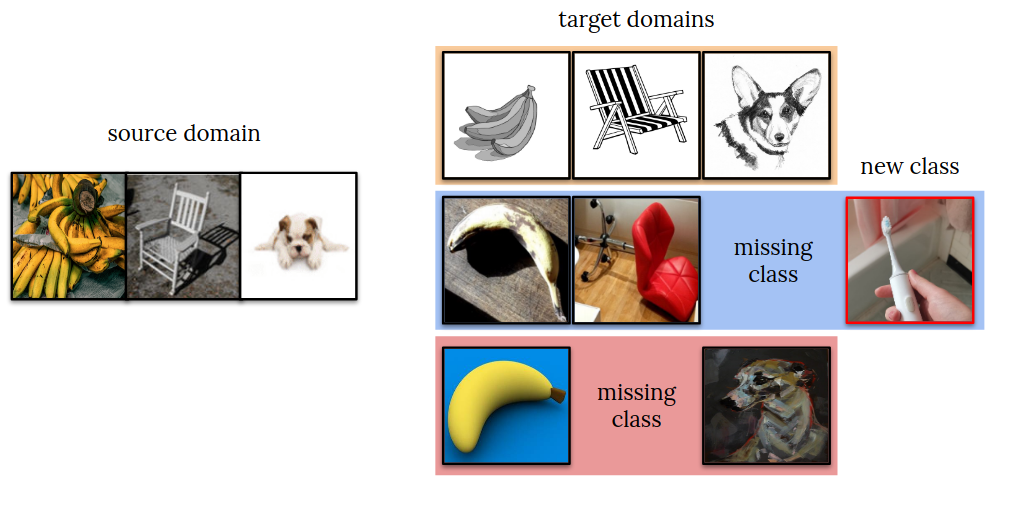

The 2021 VisDA competition is our 5th time holding the challenge! [2017], [2018], [2019], [2020]. This year, we invite methods that can adapt to novel test distributions in an open-world setting. Teams will be given labeled source data from ImageNet and unlabeled target data from a different target distribution. In addition to input distribution shift, the target data may also have missing and/or novel classes as in the Universal Domain Adaptation (UniDA) setting [1]. Successful approaches will improve classification accuracy of known categories while learning to deal with missing and/or unknown categories.

Announcements:

- June 23rd: the official devkit and with data urls are released on [github].

- July 7th: the evaluation server and the leaderboard are up on [codalab]

- July 26th: the technical report describing the challenge is avaliable on [arxiv].

- July 28th: the [prize fund] is finalized

- Aug 10th: the [rules] regarding ensembling and energy efficiency are updated.

- Sept 30th: [test data] and an example submission are released, test leaderboard is live.

Data

- Training set: images and object labels from the standard ImageNet 1000-class training dataset. No other training data is permitted, but data augmentation is allowed (see rules).

- Development set: images and labels sampled from ImageNet-C(orruptions), ImageNet-R(enditions) and ObjectNet. The dev set can be used for model development, but cannot be used to train the final submitted model, only to validate it to tune hyperparameters. The dev domain contains some (but not necessarily all) source classes and some novel classes.

- Test set: similar to the development set but with a different input distribution and category composition. No labels are released for the test set. Download links: [test images], [example submission] with correct file order for the [test leaderboard].

@misc{visda2021,

title={VisDA-2021 Competition Universal Domain Adaptation to Improve Performance on Out-of-Distribution Data},

author={Dina Bashkirova and Dan Hendrycks and Donghyun Kim and Samarth Mishra and Kate Saenko and Kuniaki Saito and Piotr Teterwak and Ben Usman},

year={2021},

eprint={2107.11011},

archivePrefix={arXiv},

primaryClass={cs.LG}

}Prizes

The top three teams will receive pre-paid VISA gift cards: $2000 for the 1st place, $500 for 2nd and 3rd.

Evaluation

We will use CodaLab to evaluate submissions and maintain a leaderboard. A team’s score on the leaderboard will be determined using a combination of two metrics: classification accuracy (ACC) and area under the ROC curve (AUC). The accuracy is computed on the known (inlier) categories only. The AUC measures unknown (outlier) category detection and is the area under the ROC curve computed by thresholding a score that represents how likely the input is to be an unknown class. The detailed instructions and a development kit with specific details on submission formatting and computing the evaluation metrics can be found in the official [github repo]. The evaluation server and the leaderboard are live [at codalab].Rules

The VisDA challenge tests adaptation and model transfer, so the rules are different from most other challenges. Please read them carefully.- Supervised Training: Teams may only submit test results of models trained on the source domain data. To ensure equal comparison, we do not allow training on any other external data or any form of manual data labeling.

- Unsupervised training: Models can be adapted (trained) on the test data in an unsupervised way, i.e. without labels.

- Source-only Models: The performance of a domain adaptation algorithm greatly depends on the baseline performance of the model trained only on source data. For example, ResNet-50 will work better than AlexNet. We ask that teams submit two sets of results: 1) predictions obtained only with the source-trained model, and 2) predictions obtained with the adapted model. Note that the source-only performance will not affect the ranking. See the development kit for submission formatting details.

- Leaderboard: The main leaderboard for each competition track will show results of adapted models and will be used to determine the final team ranks. We compute the full ranking for each metric and take the average over rankings, and break ties using ACC. For example, if Alice is 1st in terms of ACC and 3rd in terms of AUC (2.0 average ranking), and Bob is 2nd in both (2.0 average ranking), and Carol is 2nd in ACC and 3rd in AUC ranking (2.5 average ranking), then Alice and Bob have a tie, but Alice wins because her ACC raking is higher, so the final ranking is: Alice (1st), Bob (2nd), Carol (3rd).

- Model size: To encourage improvements in universal domain adaptation, rather than general optimization or underlying model architectures, models must be limited to a total size of 100 million parameters.

- Ensembling: Ensembling is now allowed, but each additional forward pass through a parameter will count towards the total number of parameters allowed (100M). For example, an ensemble that passes through a 50M model twice will count as 100M towards the parameter cap.

- Pseudo-labeling: If you use an additional step (e.g. a pseudo-labeling model) for trainig, but you do not use it during inference, it does not count towards the 100M parameter limit.

- Energy efficiency: Teams must report the total training time of the submitted model which should be reasonable (which we define as not exceeding 100 GPU days of V100 (16GB version) but contact us if unsure). Energy efficient solutions will be highlighted even if they do not finish in the top three.

FAQ

- Where do we download data and submit results? The submission devkit and evaluation server will be announced soon. Please sign up for our mailing list to receive email updates.

- Can we train models on data other than the source domain? No. Participants may only train their models on the official training set, i.e. the training set of ImageNet-1K. The final submitted model may not be trained on the dev set, but its hyper-parameters may be set on the dev set.

- What data can we use to tune hyper-parameters? Only the provided dev set can be used to tune hyper-parameters. Tuning hyper-parameters on other datasets disqualifies the submission.

- Can we assign pseudo labels to the unlabeled data in the target domain? Yes, assigning pseudo labels is allowed as long as no human labeling is involved.

- Can we use data augmentation? Yes, except for a [blacklist]. Data augmentation techniques that modify the original training image, such as adding noise to it or cropping, are allowed. The only exception is exact corruptions used in ImageNet-C, see the [blacklist].

- Do we have to use the provided baseline models? No, these are provided for your convenience and are optional.

- How many submissions can each team submit per competition track? For the validation domain, the number of submissions per team is limited to 20 upload per day and there are no restrictions on the total number of submissions. For the test domain, the number of submissions per team is limited to 1 upload per day and 5 uploads in total. Only one account per team must be used to submit results. Do not create multiple accounts for a single project to circumvent this limit, as this will result in disqualification.

- Can multiple teams enter from the same research group? Yes, so long as teams do not have any overlapping members, i.e. one person can only participate in one team.

- Can external data be used? No.

- Are challenge participants required to reveal all the details of their methods? Yes, but only the top performing teams. To qualify to win, teams are required to include a four+ page report describing their methods and submit their code and model so that we can reproduce their results. The winners’ reports will be posted on the challenge website; open sourcing the code is optional but highly encouraged.

Sponsors